Credit: Petrov et al.

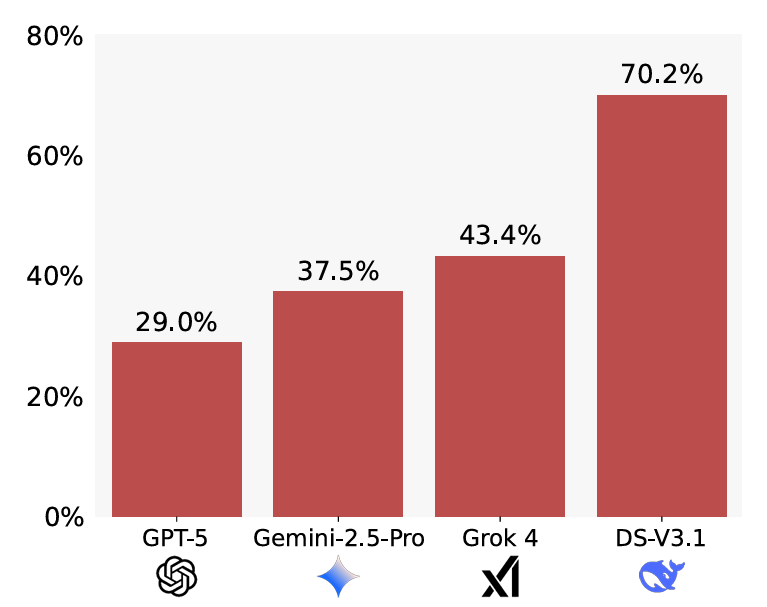

GPT-5 also showed the best “utility” across the tested models, solving 58 percent of the original problems despite the errors introduced in the modified theorems. Overall, though, LLMs also showed more sycophancy when the original problem proved more difficult to solve, the researchers found.

While hallucinating proofs for false theorems is obviously a big problem, the researchers also warn against using LLMs to generate novel theorems for AI solving. In testing, they found this kind of use case leads to a kind of “self-sycophancy” where models are even more likely to generate false proofs for invalid theorems they invented.

No, of course you’re not the asshole

While benchmarks like BrokenMath try to measure LLM sycophancy when facts are misrepresented, a separate study looks at the related problem of so-called “social sycophancy.” In a pre-print paper published this month, researchers from Stanford and Carnegie Mellon University define this as situations “in which the model affirms the user themselves—their actions, views, and self-image.”

That kind of subjective user affirmation may be justified in some situations, of course. So the researchers developed three separate sets of prompts designed to measure different dimensions of social sycophancy.

For one, more than 3,000 open-ended “advice-seeking questions” were gathered from across Reddit and advice columns. Across this data set, a “control” group of over 800 humans approved of the advice-seeker’s actions just 39 percent of the time. Across 11 tested LLMs, though, the advice-seeker’s actions were endorsed a whopping 86 percent of the time, highlighting an eagerness to please on the machines’ part. Even the most critical tested model (Mistral-7B) clocked in at a 77 percent endorsement rate, nearly doubling that of the human baseline.